Milica Panić

Milica is a marketing professional with over 10 years in the field. As TAUS Head of Product Marketing, she manages the positioning and commercialization of TAUS data services and products, as well as the development of taus.net. Before joining TAUS in 2017, she worked in various roles at Booking.com, including localization management, project management, and content marketing. Milica holds two MAs in Dutch Language and Literature, from the University of Belgrade and Leiden University. She is passionate about continuously inventing new ways to teach languages.

by Milica Panić, 03/06/2021

Ideal use cases for community-sourcing training data for ML, and common misconceptions around these data acquisition models.

by Milica Panić, 04/05/2021

Here's a brief look at the market for language data and how to define the right pricing for datasets.

by Milica Panić, 01/04/2021

Here are some considerations for ethics in AI and four tips on how to avoid data bias in AI training.

by Milica Panić, 26/03/2021

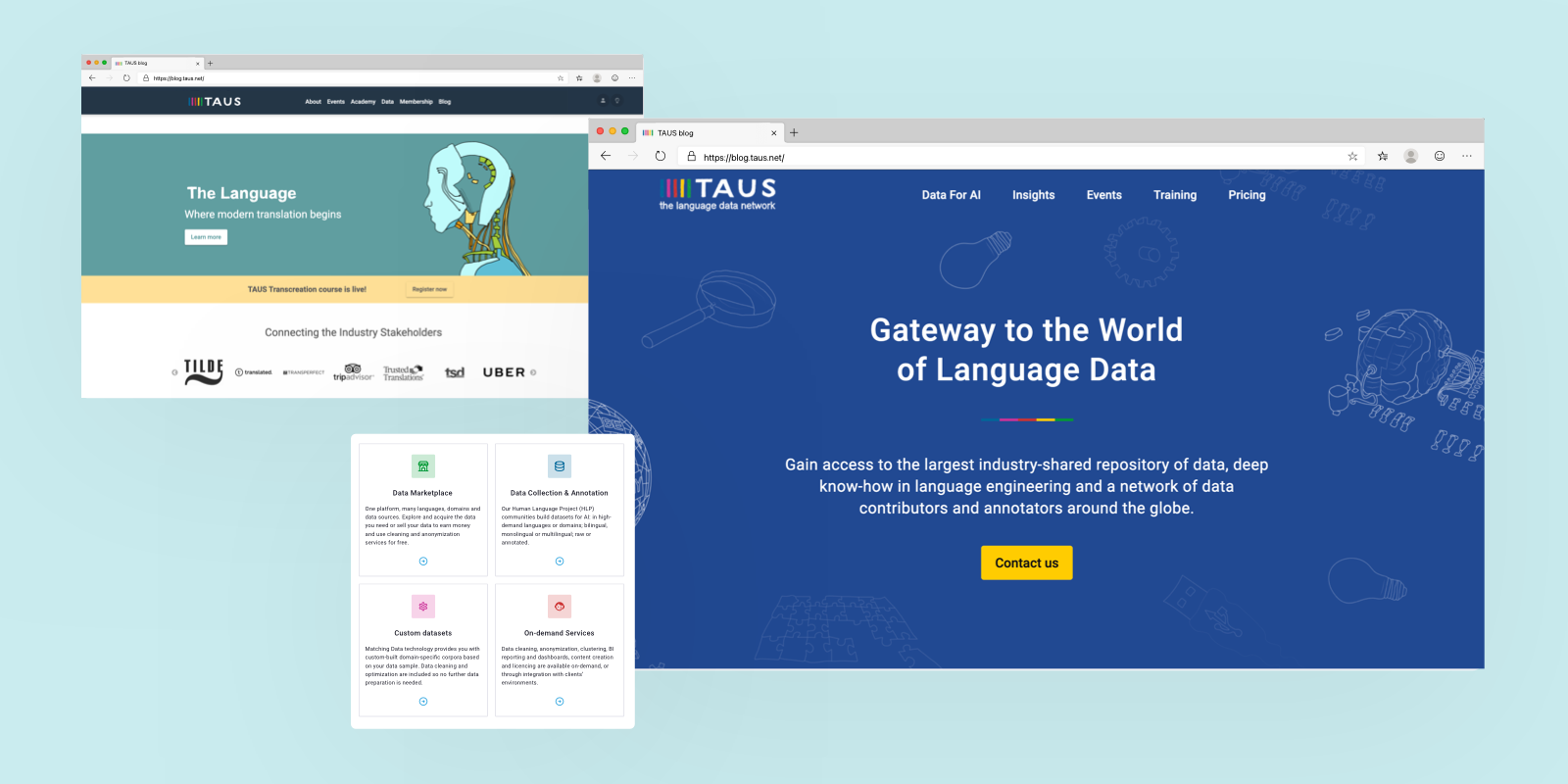

Along with its value offering, TAUS has gone through a series of brand and website updates. We're excited to share what those updates mean and why we made them.

by Milica Panić, 26/11/2020

Here is a step-by-step guide to publishing your language data on the TAUS Data Marketplace.

by Milica Panić, 18/11/2020

We share some of the main challenges that we’ve faced while setting up the very first marketplace for language data for AI: the TAUS Data Marketplace.

by Milica Panić, 22/07/2020

Automatic evaluation of Machine Translation (MT) output refers to the evaluation of translated content using automated metrics such as BLEU, NIST, METEOR, TER, and CharacTER.

by Milica Panić, 06/05/2020

How do you best implement machine translation, and what factors should you pay attention to?

by Milica Panić, 05/03/2020

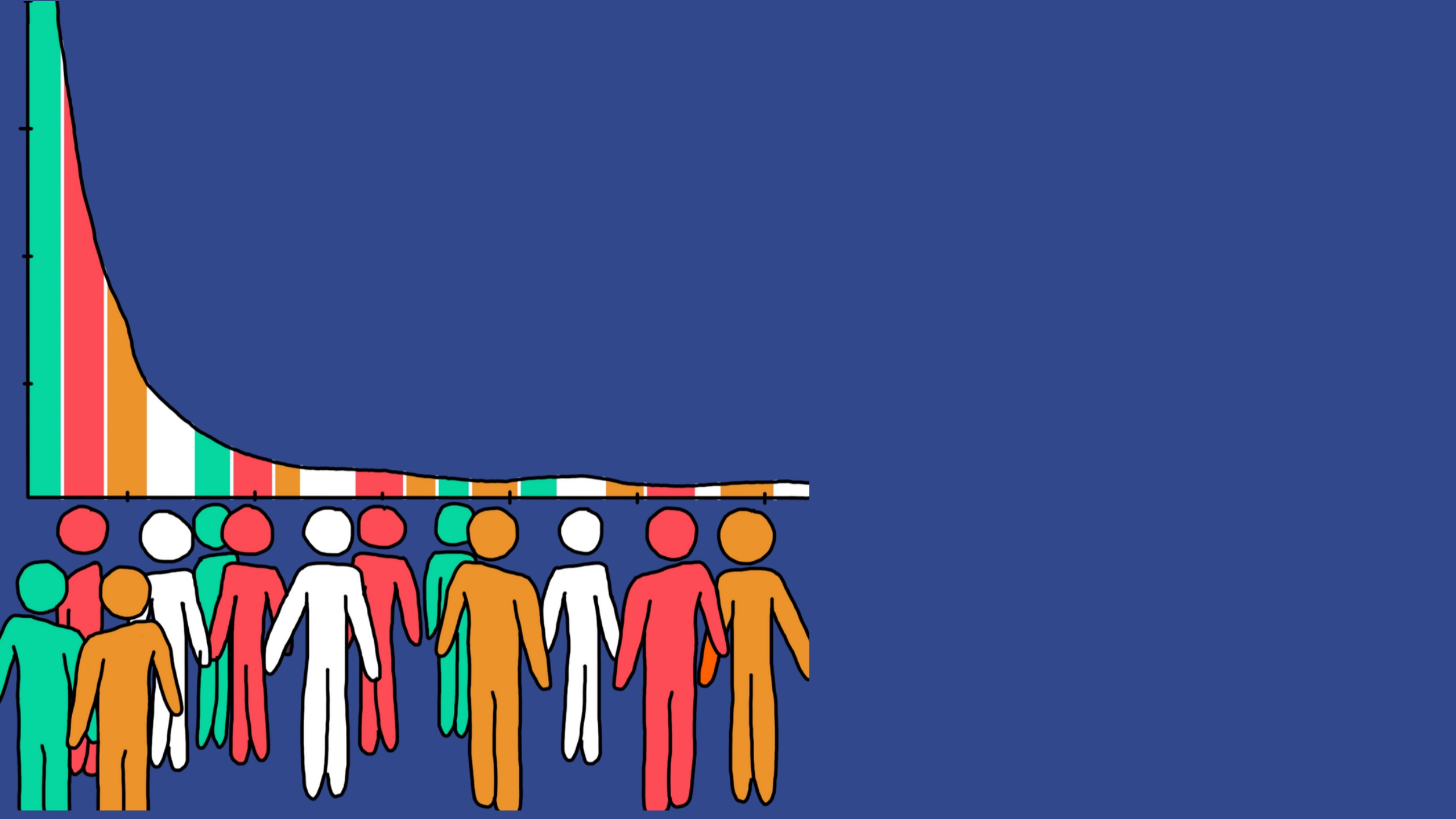

As machine translation for low-resource languages becomes more popular, the need for low-resource language data becomes critical. Here's why.

by Milica Panić, 03/03/2020

Data are the key to process improvements, quality control, and automation, and they can be collected in a GDPR-compliant way. Learn how TAUS DQF treats your personal data.

by Milica Panić, 26/02/2020

Let's investigate copyright scenarios in a typical translation supply chain, examine who owns language data, and define data ownership in translation.

by Milica Panić, 07/02/2020

What is TAUS DQF? What does DQF stand for? Everything you want to know about the Dynamic Quality Framework.

by Milica Panić, 07/10/2019

We've run an in-domain data experiment in the WMT Workgroup to measure the effectiveness of domain-specific training data. TAUS Matching Data corpora performed strongly across all language pairs and proved that fine-tuning the data brings a guaranteed BLEU score improvement.

by Milica Panić, 25/09/2019

The TAUS Partner Foundation Board brings together the largest stakeholders in the sector to accelerate growth and boost the value of global content and communications. With Unbabel joining, the board now has nine corporate members.

by Milica Panić, 17/07/2019

How do we prepare for the changes to come? We look at the question from three perspectives (buyer, LSP, and technology provider) and divide it into three gaps: knowledge, operational, and data.

by Milica Panić, 14/05/2019

Where can I source parallel language data? What are the methods to find language data for MT engine training? We listed them for you!

by Milica Panić, 08/04/2019

TAUS Summits continue to look into the future of global content, this time in New York. Here is your briefing on what leading players in the translation and localization industry have to say about reaching the next billion users with global content.

by Milica Panić, 15/03/2019

Highlights of the TAUS Global Content Summit Amsterdam on March 6, 2019, covered a vast range of topics, from the importance of the data layer in storytelling to human parity.

by Milica Panić, 15/01/2019

In a PhD study whose goal was to set up a method for evaluating the quality of machine translation output and for judging its readiness for use in production, DQF-MQM turns out to be an invaluable addition to automatic MT quality metrics.

by Milica Panić, 06/11/2018

Highlights from the TAUS QE Summit 2018. This report highlights new and old translation-quality challenges and potential solutions around four main topics: business intelligence, user experience, risk and expectation management, and DQF Roadmap planning.