dynamic quality framework

by Dace Dzeguze, 05/03/2020

DQF users on SDL Trados Studio can now send metadata only and still generate detailed quality evaluation reports on the DQF Dashboard.

by Milica Panić, 03/03/2020

Data are the key to process improvements, quality control, and automation, and they can be collected in a GDPR-compliant way. Learn how TAUS DQF treats your personal data.

by Dace Dzeguze, 25/02/2020

What is quality assurance? How can you do translation quality assurance in the most efficient and data-oriented way?

by Milica Panić, 07/02/2020

What is TAUS DQF? What does DQF stand for? Everything you want to know about the Dynamic Quality Framework.

by Dace Dzeguze, 11/07/2019

The DQF plugin for productivity and quality tracking in SDL Trados Studio is the most popular DQF integration. Here are the six reasons why.

by Milica Panić, 15/01/2019

In a PhD study that set out to develop a method for evaluating the quality of machine translation output and judging its readiness for use in production, DQF-MQM turns out to be an invaluable addition to automatic MT quality metrics.

by Şölen Aslan, 14/01/2019

The DQF data accumulated and processed over the years has reached the point where it can be used to inform strategic business decisions. The stats from that data will be shared quarterly as a Business Intelligence Bulletin to inform the translation and localization industry.

07/01/2019

Close to 200 million words have been processed by DQF in the past year. Thousands of translators and reviewers have DQF plugged into their work environment. Now, with BI Bulletins, Confidence Score, MY DQF Toolbox and DQF Reviewer, it is bringing the industry one step closer to fixing the operational gap.

by Dace Dzeguze, 13/08/2018

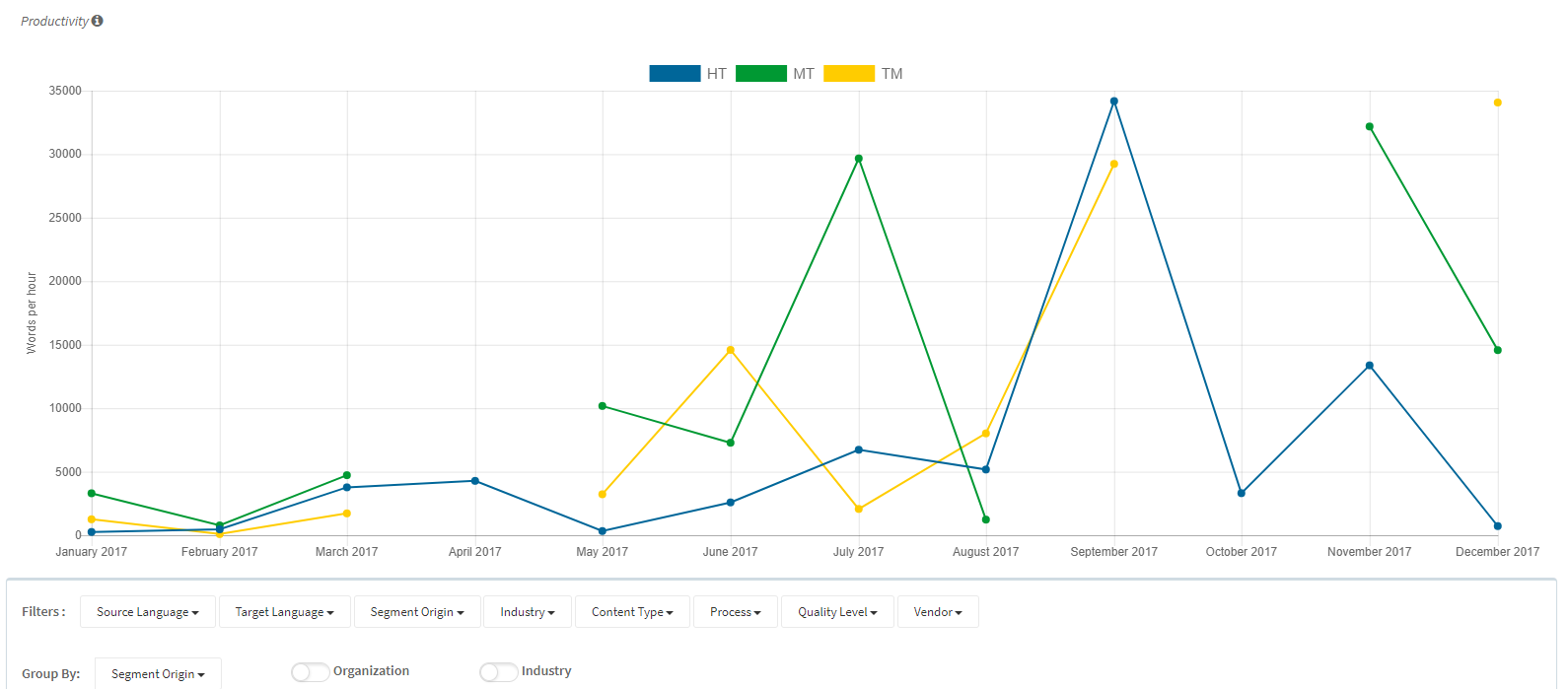

Since July 2018, the TAUS Quality Dashboard has featured the first set of trend reports on translation productivity and correction density. In this post, we list the benefits that DQF (Dynamic Quality Framework) API users can get out of the TAUS trend reports.

by Milica Panić, 08/08/2018

Use Case: Dell-EMC produces high volumes of translated content: more than 200 million words annually, in more than 34 languages and dialects, using four different vendors. Since July 2017, 78 million words have run through the DQF integration, with plans to have all volume flowing through the integration within the next year.

by Milica Panić, 16/07/2018

With machine translation and productivity challenges, LSPs need to become data owners and risk brokers, much like insurance companies. Managing your business means managing your data. If you collect and analyze productivity data, you can manage the risks of low productivity and profitability.

by Attila Görög, 23/06/2017

In this blog post, we highlight some of the standards and metrics used in translation quality management.

23/06/2017

Error Typology is a venerable evaluation method for content quality. In this blog post Kirill Soloviev describes how to use it.

by Attila Görög, 08/10/2015

The TAUS efficiency score replaces traditional productivity measurement as it can be applied to every form of translation.